Predicting Animation Skeletons for 3D Articulated Models via Volumetric Nets

3DV 2019

Abstract

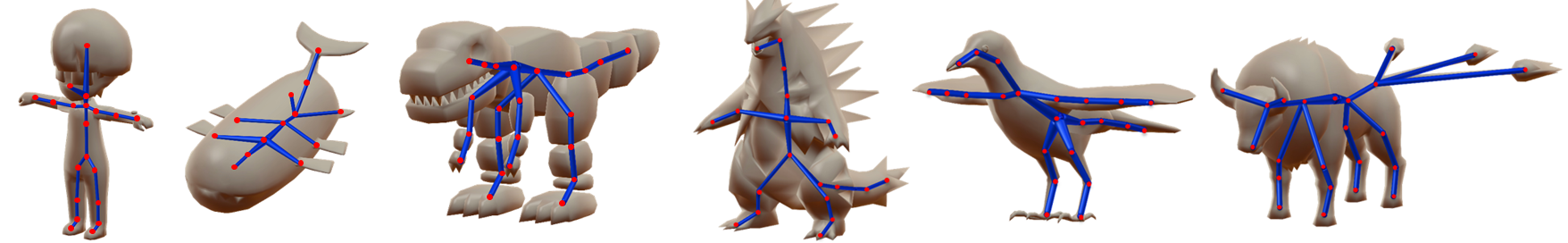

We present a learning method for predicting animation skeletons for input 3D models of articulated characters. In contrast to previous approaches that fit pre-defined skeleton templates or predict fixed sets of joints, our method produces an animation skeleton tailored to the structure and geometry of the input 3D model. Our architecture is based on a stack of hourglass modules trained on a large dataset of 3D rigged characters mined from the web. It operates on the volumetric representation of the input 3D shapes augmented with geometric shape features that provide additional cues for joint and bone locations. Our method also enables intuitive user control of the level-of-detail for the output skeleton. Our evaluation demonstrates that our approach predicts animation skeletons that are much closer to the ones created by humans than those produced by several alternatives and baselines.
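As a rough illustration of what a "volumetric representation" of an input shape can look like, the sketch below maps sampled surface points to a binary occupancy grid with NumPy. It is a minimal example under stated assumptions, not the preprocessing used in the released code: the function name `voxelize_points`, the default resolution of 32, and the choice of point samples as input are all hypothetical, and the geometric shape features mentioned above are not included.

```python
import numpy as np


def voxelize_points(points, resolution=32):
    """Map an (N, 3) array of surface samples to a binary occupancy grid.

    Hypothetical helper for illustration only; the resolution and the
    normalization scheme are assumptions, not values from the paper.
    """
    points = np.asarray(points, dtype=np.float64)
    lo = points.min(axis=0)
    hi = points.max(axis=0)

    # Use one uniform scale so the longest bounding-box side spans the grid
    # and the shape's proportions are preserved.
    scale = (hi - lo).max()
    if scale == 0:
        scale = 1.0  # degenerate input: all points coincide

    normalized = (points - lo) / scale  # coordinates now lie in [0, 1]

    # Convert to integer voxel indices; clip so that a coordinate of exactly
    # 1.0 falls into the last voxel instead of out of bounds.
    idx = np.clip((normalized * resolution).astype(int), 0, resolution - 1)

    grid = np.zeros((resolution, resolution, resolution), dtype=bool)
    grid[idx[:, 0], idx[:, 1], idx[:, 2]] = True
    return grid


if __name__ == "__main__":
    samples = np.random.default_rng(0).random((1000, 3))
    occupancy = voxelize_points(samples, resolution=16)
    print(occupancy.shape, int(occupancy.sum()))
```

A grid like this marks only the voxels touched by surface samples. A network operating on volumes would typically receive it as one input channel, with any additional per-voxel features stacked as further channels.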

Paper & Supp.

AnimSkelVolNet.pdf, 3.98MB

AnimSkelVolNet_supp.pdf, 88KB

Poster

3DV19-skeleton-poster.pdf, 4.91MB

Source Code & Data

Github code: https://github.com/zhan-xu/AnimSkelVolNet

Dataset: ModelsResource-RigNetv0

[News!] Check out our latest project, RigNet, and the updated dataset, ModelsResource-RigNetv1.

Citation

If you use our dataset or code, please cite the following papers.

@InProceedings{AnimSkelVolNet,
  title={Predicting Animation Skeletons for 3D Articulated Models via Volumetric Nets},
  author={Zhan Xu and Yang Zhou and Evangelos Kalogerakis and Karan Singh},
  booktitle={2019 International Conference on 3D Vision (3DV)},
  year={2019}
}

@article{RigNet,
  title={RigNet: Neural Rigging for Articulated Characters},
  author={Zhan Xu and Yang Zhou and Evangelos Kalogerakis and Chris Landreth and Karan Singh},
  journal={ACM Trans. on Graphics},
  year={2020},
  volume={39}
}

Acknowledgements

This research is funded by NSF (CHS-1617333). Our experiments were performed on the UMass GPU cluster obtained under the Collaborative Fund managed by the Massachusetts Technology Collaborative.