3D Pose Estimation in the Wild

Below is an implementation of the 3D pose estimation algorithm described in the paper:

Action Recognition Using a Distributed Representation of Pose and Appearance,

Subhransu Maji, Lubomir Bourdev and Jitendra Malik,

In Proceedings, CVPR 2011, Colorado Springs.


For the action classification software, see: http://www.cs.berkeley.edu/~smaji/projects/action

The code is built on top of the poselets framework.

A Dataset for 3D Pose Estimation

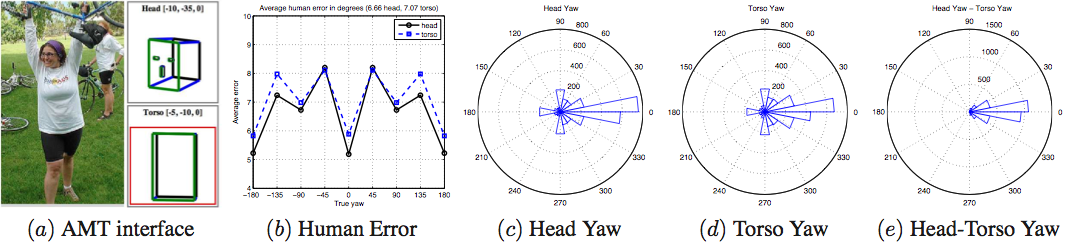

Using Amazon Mechanical Turk (AMT), we collected 3D pose annotations on the images containing people in the PASCAL VOC 2010 validation dataset. These annotations were then cleaned up, resulting in 3240 annotations including reflections. The figure above shows the AMT interface (a), the agreement between human subjects across views (b), and the distribution of the yaw in the dataset (c, d, e).

The annotations and images are available for download as annotations.tar.gz. Run view_annots.m to view these annotations.

The annotations are also available in text format as 3dannots.txt. Each line contains one annotation, specifying the bounding box and the head and torso yaw in the following format: image_name x_min y_min width height headyaw torsoyaw
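The text format above can be read with a few lines of code. The following is a minimal Python sketch, not part of the released MATLAB code. It assumes the seven fields are separated by whitespace, that image names contain no spaces, and that all numeric fields parse as floats; the names `PoseAnnotation`, `parse_annotation_line` and `load_annotations` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PoseAnnotation:
    """One line of the text annotations (field names follow the format above)."""
    image_name: str
    x_min: float
    y_min: float
    width: float
    height: float
    head_yaw: float   # degrees
    torso_yaw: float  # degrees


def parse_annotation_line(line):
    """Parse 'image_name x_min y_min width height headyaw torsoyaw'.

    Assumes whitespace-separated fields (an assumption, not confirmed
    by the dataset documentation).
    """
    fields = line.split()
    if len(fields) != 7:
        raise ValueError(f"expected 7 fields, got {len(fields)}: {line!r}")
    values = [float(v) for v in fields[1:]]
    return PoseAnnotation(fields[0], *values)


def load_annotations(path):
    """Read every non-empty line of an annotation file."""
    with open(path) as f:
        return [parse_annotation_line(line) for line in f if line.strip()]
```

For example, `parse_annotation_line("some_image 10 20 100 200 -90 45")` (a made-up line, not taken from the dataset) yields an annotation with `head_yaw == -90.0` and `torso_yaw == 45.0`.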

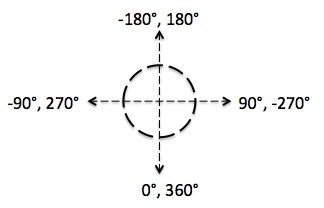

Yaw convention: 0 = front facing, -90 = right facing, +90 = left facing, -180/180 = back facing.

Note that "left facing" means the person is facing toward his/her own left side.
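Because -180 and 180 both denote back facing, comparing two yaw values requires wrapping around the circle. The sketch below illustrates this under the convention above. The helper names and the 90-degree-wide bins used to map an angle to a coarse label are assumptions made for illustration; they are not taken from the paper or the released code.

```python
def wrap_yaw(deg):
    """Map any angle in degrees to the interval [-180, 180)."""
    return (deg + 180.0) % 360.0 - 180.0


def yaw_error(a, b):
    """Smallest absolute angular difference between two yaws, in degrees."""
    return abs(wrap_yaw(a - b))


def facing_label(deg):
    """Coarse label for a yaw angle, using the convention above.

    The bin boundaries (+/-45 and +/-135 degrees) are an illustrative
    assumption, not part of the dataset definition.
    """
    y = wrap_yaw(deg)
    if abs(y) <= 45.0:
        return "front"
    if abs(y) >= 135.0:
        return "back"
    return "left" if y > 0 else "right"
```

With these helpers, a yaw of 170 and a yaw of -170 are only 20 degrees apart, even though their raw difference is 340.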

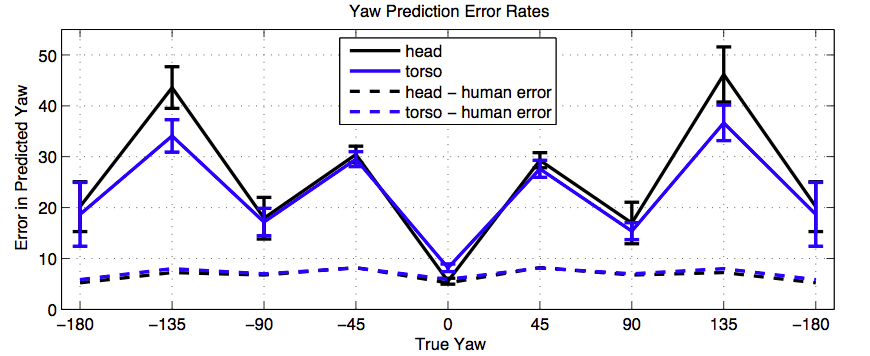

Below are the results of pose estimation using 10-fold cross-validation (50-50 split). Note: for each split, we make sure that an image and its flipped version are either both in the training set or both in the test set.
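The constraint on flipped images amounts to splitting by group rather than by individual annotation. The following Python sketch shows one way to do this; it is not the authors' code. It assumes that each annotation can be mapped to a group key shared by an image and its reflection (the `group_of` argument is hypothetical), and it reads "10 fold, 50-50 split" as ten random half splits, which is an interpretation rather than something the page states.

```python
import random
from collections import defaultdict


def grouped_half_split(items, group_of, seed):
    """Split items 50-50 by group so that a group is never divided.

    group_of(item) must return the same key for an image and its
    flipped version (how flips are identified is an assumption).
    """
    groups = defaultdict(list)
    for item in items:
        groups[group_of(item)].append(item)
    keys = sorted(groups)                 # deterministic order before shuffling
    random.Random(seed).shuffle(keys)
    half = len(keys) // 2
    train = [it for k in keys[:half] for it in groups[k]]
    test = [it for k in keys[half:] for it in groups[k]]
    return train, test


# Toy data: (image id, is_flipped) pairs; the ids are made up.
items = [(f"img{i}", flipped) for i in range(6) for flipped in (False, True)]

# Ten random splits, one per seed.
splits = [grouped_half_split(items, lambda it: it[0], seed) for seed in range(10)]
```

Shuffling the group keys instead of the items is what guarantees that no reflected copy of a training image ends up in the test set.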

Download and Instructions

Installation instructions:

- The code includes the poselet detection module. First make sure that it works by running demo.m inside the poselet_detection folder. See here for more details.

- Run demo3dpose.m, which estimates the 3D pose of the people specified in the image.

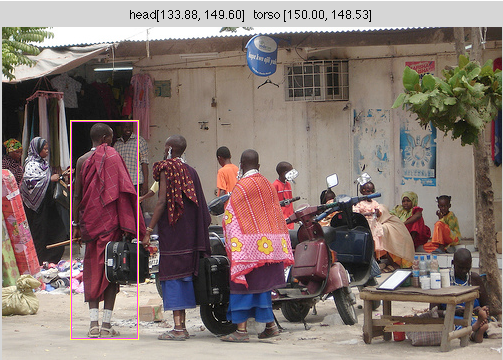

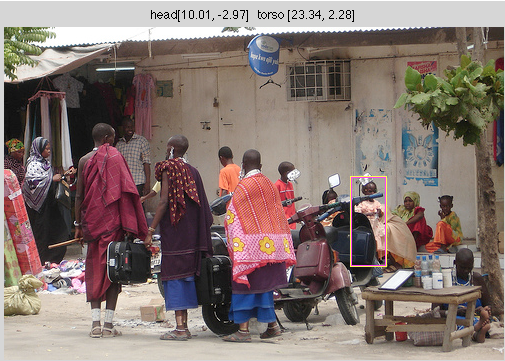

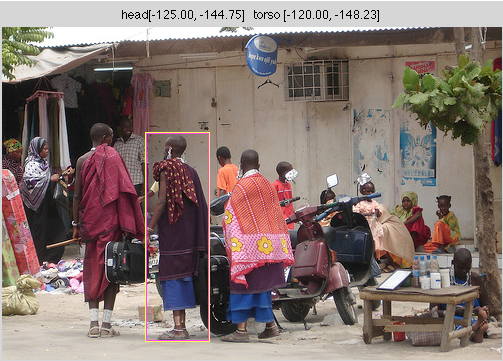

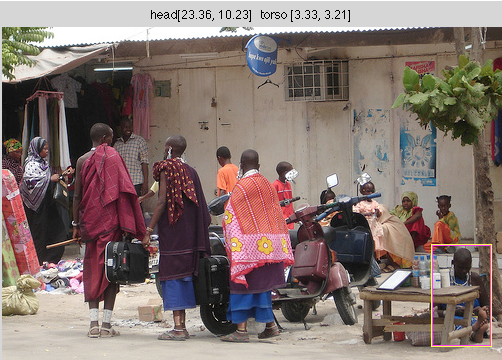

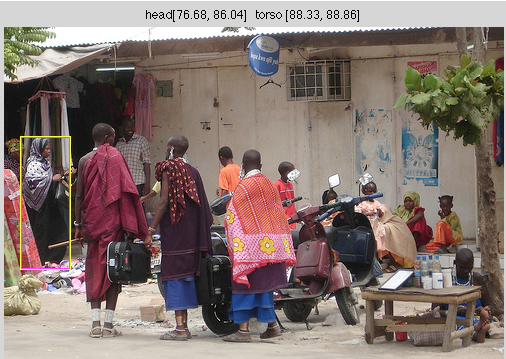

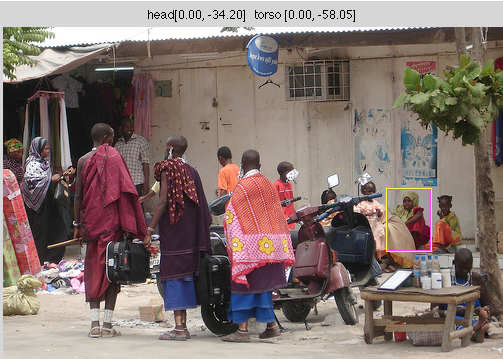

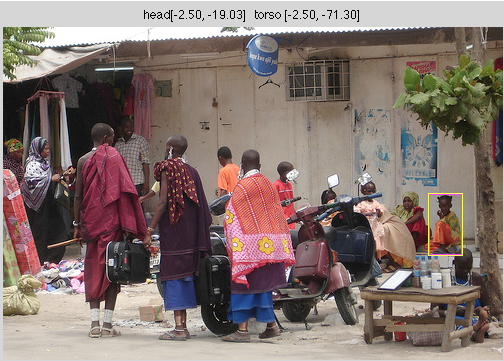

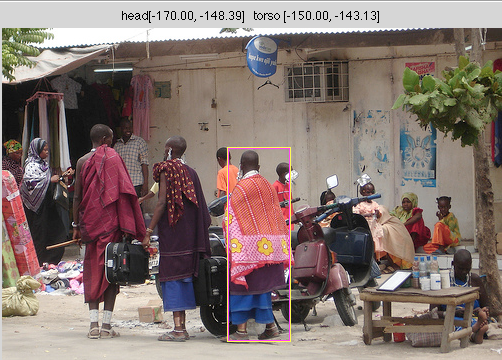

Below are the outputs of the program:

Each image shows a person with the bounding box, yaw of the head [true, predicted] and torso [true, predicted] in the figure title.

|  |

|  |

|  |

|  |

Last updated: March 26, 2011

Subhransu Maji